If we only allow our model to see Paris, but nothing else, we will miss out on the important information that the word to often times appears with LOCATIONs. Let's say our model is trying to generate the correct label 1 for Paris. In one pass (meaning a call to forward()), our model will try to generate the correct label for one word. The corresponding training label for this sentence is 0, 0, 0, 0, 1 since only Paris, the last word, is a LOCATION. This is the function that is called when a parameter is passed to our module, such as in model(x).Įarlier we mentioned that our task was called Word Window Classification because our model is looking at the surroundings words in addition to the given word when it needs to make a prediction.įor example, let's take the sentence "We always come to Paris". Tensors are not parameters, but they can be turned into parameters if they are wrapped in nn.Parameter class.Īll classes extending nn.Module are also expected to implement a forward(x) function, where x is a tensor. All the class attributes we define which are nn module objects are treated as parameters, which can be learned during the training. We can then initialize our parameters in the _init_ function, starting with a call to the _init_ function of the super class.

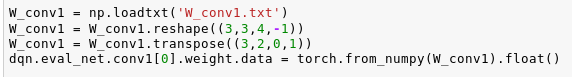

To create a custom module, the first thing we have to do is to extend the nn.Module. You will be practicing these in the later assignment. For example, we can build a the nn.Linear (which also extends nn.Module) on our own using the tensor introduced earlier! We can also build new, more complex modules, such as a custom neural network. Instead of using the predefined modules, we can also build our own by extending the nn.Module class. If you would like to match the way your tensor is stored in the memory to how it is used, you can use the contiguous() method. The difference here isn't too important for basic tensors, but if you perform operations that make the underlying storage of the data non-contiguous (such as taking a transpose), you will have issues using view(). Reshape() calls view() internally if the data is stored contiguously, if not, it returns a copy. They just change the meta information about out tensor, so that when we use it we will see the elements in the order we expect. This happens because some methods, such as transpose() and view(), do not actually change how our data is stored in the memory. In simple terms, contiguous means that the way our data is laid out in the memory is the same as the way we would read elements from it. You can refer to this StackOverflow answer for more information. There is a subtle difference between reshape() and view(): view() requires the data to be stored contiguously in the memory. We can also use torch.reshape() method for a similar purpose. Once you are done with the installation process, run the following cell: Alternatively, you can open this notebook using Google Colab, which already has PyTorch installed in its base kernel. To install PyTorch, you can follow the instructions here. Now that we have learned enough about the background of PyTorch, let's start by importing it into our notebook. If you would like to learn more about the differences between the two, you can check out this blog post. 
Although TensorFlow is more widely preferred in the industry, PyTorch is often times the preferred machine learning framework for researchers. At the time of its release, PyTorch appealed to the users due to its user friendly nature: as opposed to defining static graphs before performing an operation as in TensorFlow, PyTorch allowed users to define their operations as they go, which is also the approached integrated by TensorFlow in its following releases. PyTorch started of as a more flexible alternative to TensorFlow, which is another popular machine learning framework. PyTorch is a machine learning framework that is used in both academia and industry for various applications.
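
The following sketch illustrates the contiguity discussion of view() and reshape() above on a small tensor, and it assumes PyTorch is installed. transpose() produces a non-contiguous tensor, and view() refuses to flatten it. Both reshape() and contiguous().view() succeed. The exact wording of the view() error differs between PyTorch versions, so the example only looks at the exception type.

```python
import torch

# A 2x3 tensor; freshly created tensors are stored contiguously.
x = torch.arange(6).reshape(2, 3)
print(x.is_contiguous())  # True

# t() (a 2-D transpose) only changes the stride metadata, not the storage,
# so the transposed tensor is no longer contiguous.
t = x.t()
print(t.is_contiguous())  # False

# view() cannot flatten this layout without moving data, so it raises.
try:
    t.view(6)
except RuntimeError as err:
    print("view() failed with", type(err).__name__)

# reshape() falls back to copying the data when a view is not possible.
flat = t.reshape(6)
print(flat)  # tensor([0, 3, 1, 4, 2, 5])

# contiguous() copies the data into a layout matching the transposed order,
# after which view() works.
flat_view = t.contiguous().view(6)
print(torch.equal(flat, flat_view))  # True
```

In this case both flat and flat_view hold copies of the data, so modifying them leaves x unchanged.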
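
The sketch below builds a linear layer ourselves, as the text on custom modules suggests. The class names MyLinear and TinyNet are hypothetical. The small random initialization is a simplification, because the built-in nn.Linear uses a different initialization scheme. The sketch shows the points made above about custom modules:

- calling the superclass `__init__` first,
- wrapping tensors in nn.Parameter,
- registering nn.Module attributes as submodules,
- implementing forward(), which runs when we call the module as layer(x).

```python
import torch
import torch.nn as nn


class MyLinear(nn.Module):
    """Hypothetical re-implementation of a linear layer: y = x W^T + b."""

    def __init__(self, in_features: int, out_features: int):
        # Call the superclass __init__ first so nn.Module can set up its
        # parameter and submodule registries.
        super().__init__()
        # Wrapping plain tensors in nn.Parameter registers them as learnable.
        self.weight = nn.Parameter(torch.randn(out_features, in_features) * 0.01)
        self.bias = nn.Parameter(torch.zeros(out_features))

    def forward(self, x: torch.Tensor) -> torch.Tensor:
        # x: (batch, in_features) -> output: (batch, out_features)
        return x @ self.weight.T + self.bias


class TinyNet(nn.Module):
    """Hypothetical network mixing the custom layer with a built-in one."""

    def __init__(self):
        super().__init__()
        self.hidden = MyLinear(4, 8)   # nn.Module attribute: registered as a submodule
        self.output = nn.Linear(8, 1)  # built-in module: also registered
        # Plain tensor attribute: NOT a parameter (register_buffer would be the
        # idiomatic choice for non-learnable state; it is kept plain here to
        # show the difference).
        self.scale = torch.ones(1)

    def forward(self, x: torch.Tensor) -> torch.Tensor:
        return self.output(torch.relu(self.hidden(x))) * self.scale


layer = MyLinear(4, 2)
out = layer(torch.randn(3, 4))  # calling the module runs forward()
print(out.shape)  # torch.Size([3, 2])
print([name for name, _ in layer.named_parameters()])  # ['weight', 'bias']

net = TinyNet()
print([name for name, _ in net.named_parameters()])
# ['hidden.weight', 'hidden.bias', 'output.weight', 'output.bias']
```

Note that scale does not appear in the parameter list, so an optimizer built from net.parameters() would never update it.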
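
The sketch below makes the idea of a word window concrete by pairing each word of "We always come to Paris" with its surrounding words. The window size of 2 (two words on each side) is an assumption chosen for illustration. So is the "<pad>" token used to fill the window at the sentence edges. The function name make_windows is hypothetical.

```python
def make_windows(tokens, window_size, pad_token="<pad>"):
    """Return one window per token: window_size words on each side, padded at the edges."""
    padded = [pad_token] * window_size + tokens + [pad_token] * window_size
    # The token at original index i sits at padded index i + window_size,
    # so its window spans padded[i : i + 2 * window_size + 1].
    return [padded[i : i + 2 * window_size + 1] for i in range(len(tokens))]


sentence = "We always come to Paris".split()
labels = [0, 0, 0, 0, 1]  # 1 marks a LOCATION (only "Paris")

for window, label in zip(make_windows(sentence, window_size=2), labels):
    print(window, "->", label)
```

The last line printed is `['come', 'to', 'Paris', '<pad>', '<pad>'] -> 1`. A model that receives this window for Paris can therefore use the word "to" as evidence that Paris is a LOCATION.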
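
A hypothetical classifier can show how such windows might flow through a custom module's forward(). The architecture and all sizes are illustrative assumptions, not a prescribed design. The model embeds each word in the window, concatenates the embeddings, passes them through one hidden layer, and produces a sigmoid output. The word indices are random stand-ins for a real vocabulary lookup.

```python
import torch
import torch.nn as nn


class WindowClassifier(nn.Module):
    """Hypothetical word-window classifier: embeds every word in a window,
    concatenates the embeddings, and predicts P(center word is a LOCATION)."""

    def __init__(self, vocab_size: int, embed_dim: int, window_size: int, hidden_dim: int):
        super().__init__()
        full_window = 2 * window_size + 1  # center word plus window_size words per side
        self.embed = nn.Embedding(vocab_size, embed_dim)
        self.hidden = nn.Linear(full_window * embed_dim, hidden_dim)
        self.output = nn.Linear(hidden_dim, 1)

    def forward(self, windows: torch.Tensor) -> torch.Tensor:
        # windows: (batch, full_window) tensor of word indices
        embedded = self.embed(windows)          # (batch, full_window, embed_dim)
        flat = embedded.flatten(start_dim=1)    # (batch, full_window * embed_dim)
        hidden = torch.tanh(self.hidden(flat))  # (batch, hidden_dim)
        return torch.sigmoid(self.output(hidden)).squeeze(-1)  # (batch,)


torch.manual_seed(0)
window_size = 2
# Five windows (one per word of a five-word sentence) of random word indices.
windows = torch.randint(0, 100, (5, 2 * window_size + 1))
model = WindowClassifier(vocab_size=100, embed_dim=8, window_size=window_size, hidden_dim=16)
probs = model(windows)  # calls forward(); untrained, so the values are arbitrary
print(probs.shape)  # torch.Size([5])
```

Each call to forward() here scores a whole batch of windows at once, which is a common convenience. It is equivalent to scoring one word per window.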
